So you need to draw curved and bent pipe-tube-things.

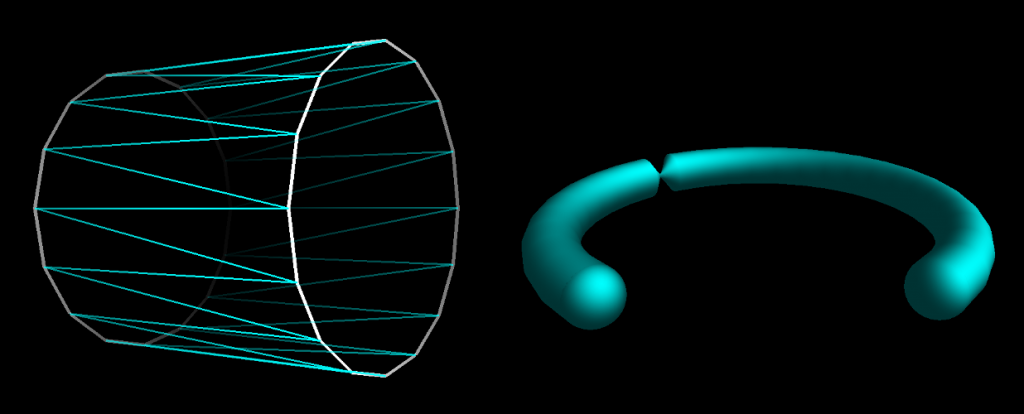

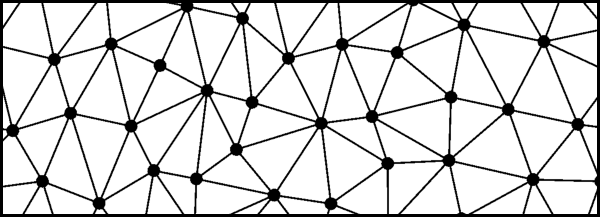

You can make a straight section with two rings of points; just orient one ring like so, the other ring like so, and draw triangles between them. For a curve, just string a bunch of short straight pieces together.

Almost but not quite. Why the hell is there that pinched part in the curved pipe?

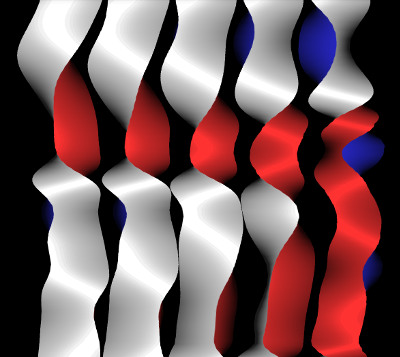

The problem is that the orientation of the rings is not as simple as “normal to the pipe’s tangent”. But a ring is a circular-symmetrical thing, right, so rotation about the pipe’s tangent shouldn’t really matter. Right?