Blats is a game played with four 6-sided dice. It’s a solitaire game of luck, superstition, and class warfare. For top secret reasons I decided to build a Blats-playing Lucky Supercomputer.

The exact rules aren’t relevant. Fully simulating the game would require an AI that I haven’t gotten around to making yet, so here it’s just four dice rolled together, “here” being a cluster of tiny metaverses.

The first step was to sniff out any 3D rigid body physics engines that could make the simulation painless. The Bullet physics library stood out immediately. I got the source; right there is an interactive demo of a bunch of cubes falling onto flat ground. Ha. It’s practically done. So I tinkered with the demo: four cubes, this friction, that friction, this gravity, some pegs in the ground just to mix things up. Quality of randomness would depend on proper bouncing and tumbling. Eventually I got it looking and feeling dice-like.

To roll the dice, each one gets a random linear impulse and a random torque impulse, with the linear impulses all aimed at a common point above the middle of the playing area. This way the dice bang together and are guaranteed at least some interaction on every roll. Not randomizing their positions and orientations between rolls lets some state of chaos carry over from previous rolls. Whether that really affects the raw output is irrelevant. It’s luckier that way.
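A minimal sketch of that roll step, written in Python for readability even though Bullet itself is a C++ library: each die gets a linear impulse aimed from its current position at a shared target point, plus a torque impulse with a uniformly random direction. The function name `roll_impulses` and the magnitude ranges are hypothetical. In Bullet, the resulting vectors would be handed to something like `btRigidBody::applyCentralImpulse` and `applyTorqueImpulse`, after waking any sleeping body with `activate()`.

```python
import math
import random
from typing import List, Optional, Tuple

Vec3 = Tuple[float, float, float]

def _scaled(v: List[float], magnitude: float) -> Vec3:
    """Rescale a nonzero vector to the given length."""
    norm = math.sqrt(sum(x * x for x in v))
    return (v[0] * magnitude / norm, v[1] * magnitude / norm, v[2] * magnitude / norm)

def roll_impulses(positions: List[Vec3], target: Vec3,
                  linear_range: Tuple[float, float] = (4.0, 8.0),   # hypothetical magnitudes
                  torque_range: Tuple[float, float] = (0.5, 2.0),   # hypothetical magnitudes
                  rng: Optional[random.Random] = None) -> List[Tuple[Vec3, Vec3]]:
    """Return one (linear_impulse, torque_impulse) pair per die.

    Linear impulses point from each die toward the shared target point with a
    random magnitude, so the dice collide; torque impulses get a uniformly random
    direction (a normalized Gaussian vector) and a random magnitude.
    """
    if rng is None:
        rng = random.Random()
    impulses = []
    for pos in positions:
        # Assumes no die sits exactly at the target (which is above the floor).
        toward_target = [t - p for t, p in zip(target, pos)]
        linear = _scaled(toward_target, rng.uniform(*linear_range))
        axis = [rng.gauss(0.0, 1.0) for _ in range(3)]
        torque = _scaled(axis, rng.uniform(*torque_range))
        impulses.append((linear, torque))
    return impulses
```

Passing a seeded `random.Random` makes a run reproducible, which helps when debugging, even if it is arguably less lucky.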

Automatically rolling is easy since Bullet, for optimization purposes I assume, keeps track of whether objects are at rest. The main process is simple: when all dice come to rest, interpret their values, roll them again, and repeat. Reading a die’s value is as simple as determining which of its six face normals has the greatest dot product with a unit “up” vector. I made a subtle mistake here at first. Originally I interpreted the dice by comparing a single face normal to the six unit vectors +x, -x, +y, -y, +z, -z. While this does divide the space of die orientations into six equal regions, and the numeric results were perfectly good, it doesn’t match how real dice work. For instance, if the one face used for measurement points sideways on a resting die, rotating the die about the vertical axis changes the interpreted value even though the top face stays the same, and that’s obviously not how it’s done on Earth.
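Here is a minimal sketch of the dot-product reading. It assumes the die’s orientation is available as a 3×3 body-to-world rotation matrix (for example, the basis of a Bullet body’s world transform), and it assumes the face layout in the hypothetical `FACE_NORMALS` table, with opposite faces summing to 7 and z as up. Only each face normal’s alignment with “up” matters, so spinning a resting die about the vertical axis cannot change the result. That is exactly the property the single-normal approach lacked.

```python
import math
from typing import Dict, Sequence, Tuple

# Hypothetical face layout in the die's local frame; opposite faces sum to 7.
FACE_NORMALS: Dict[int, Tuple[float, float, float]] = {
    1: (0.0, 0.0, 1.0), 6: (0.0, 0.0, -1.0),
    2: (1.0, 0.0, 0.0), 5: (-1.0, 0.0, 0.0),
    3: (0.0, 1.0, 0.0), 4: (0.0, -1.0, 0.0),
}

def read_die(rotation: Sequence[Sequence[float]],
             up: Tuple[float, float, float] = (0.0, 0.0, 1.0)) -> int:
    """Return the value of the face whose world-space normal points most nearly 'up'.

    `rotation` is a row-major 3x3 body-to-world rotation matrix. z-up is assumed
    by default; pass e.g. up=(0.0, 1.0, 0.0) for a y-up world.
    """
    best_value, best_dot = 0, -math.inf
    for value, n in FACE_NORMALS.items():
        # Rotate the local face normal into world space: world_n = R @ n.
        world_n = [sum(rotation[i][j] * n[j] for j in range(3)) for i in range(3)]
        d = sum(w * u for w, u in zip(world_n, up))
        if d > best_dot:
            best_value, best_dot = value, d
    return best_value
```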

The visualization was useful for tuning and debugging, but it has no place in the actual deployment. The Bullet demo app’s GUI hooks in through essentially a single class inheritance, so it’s very easy to strip out. So here we have it: a console program that quietly runs a physics simulation of dice rolling and periodically reports rolls.
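The headless loop boils down to a few steps: step the world, wait until every die is at rest, report the values, then roll again. Below is a sketch in Python against a hypothetical `DiceWorld` interface whose method names are illustrative and not Bullet API. In Bullet terms, “at rest” would roughly mean `isActive()` returning false for every die’s body.

```python
from typing import Iterator, List, Protocol

class DiceWorld(Protocol):
    """Hypothetical wrapper around the physics world (not a Bullet API)."""
    def step(self, dt: float) -> None: ...
    def all_at_rest(self) -> bool: ...
    def read_values(self) -> List[int]: ...
    def roll(self) -> None: ...

def run_rolls(world: DiceWorld, n_rolls: int, dt: float = 1.0 / 60.0,
              max_steps_per_roll: int = 100_000) -> Iterator[List[int]]:
    """Step the simulation headlessly; each time all dice settle, yield their values and re-roll."""
    world.roll()
    for _ in range(n_rolls):
        for _ in range(max_steps_per_roll):
            world.step(dt)
            if world.all_at_rest():
                break
        else:
            raise RuntimeError("dice never came to rest")
        yield world.read_values()
        world.roll()  # runs when the caller asks for the next roll
```

For a console program, the driver could be as simple as `for values in run_rolls(world, 10**9): print(*values, flush=True)`, so each roll reaches stdout as soon as it lands.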

I’ve got the Lucky all bound up in a statically linked executable. Now for the Supercomputer. The objective is not necessarily to achieve extreme processing throughput; the point is to make a supercomputer-like-array-of-computers. If the performance is awful, that’s extra hilarious, adorable and/or pathetic. Enter the Visara NCT 1783 thin client.

I have four of these thin client things. Each sports a 300MHz Geode GX1 and 32MB of RAM, except for one, which is stacked with 128MB. They have built-in everything and they’re pretty handy. I’ve made them into firewalls and jukeboxes and other, stranger appliances, and now they will unite as a supercomputer.

There’s no space or power for desktop hard drives, and I don’t have enough flash disks to go around, so network booting is the only option. PXELINUX makes that painless: basic DHCP server settings, a kernel and filesystem image sitting on an ftp server, and the machines boot right up. I found ttylinux to be a good base operating system here: ssh and ftp servers out of the box, several megabytes free on the filesystem, very brass tacks. With the dice simulation incorporated in the initrd and some netcat, each machine becomes a networked dice roll server.

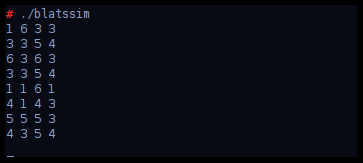

A python script connects to all the nodes and outputs all the roll results together. Basically the supercomputer is complete: a bank of four computers laboriously rolls sets of four dice, and the sum results are a unix pipe away from endless possibilities. For instance the results can scroll in a terminal. Woo. Hoo.
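This is a minimal sketch of such an aggregator, not the actual script. It assumes each node writes one roll per line over a TCP connection, and it uses the standard-library `selectors` module to interleave lines from all nodes as they arrive.

```python
import selectors
import socket
from typing import Dict, Iterator, List, Tuple

def merge_roll_streams(socks: List[socket.socket]) -> Iterator[Tuple[int, str]]:
    """Yield (node_index, line) as complete lines arrive from any connected node."""
    sel = selectors.DefaultSelector()
    buffers: Dict[int, bytes] = {}
    for i, s in enumerate(socks):
        s.setblocking(False)
        sel.register(s, selectors.EVENT_READ, i)
        buffers[i] = b""
    open_count = len(socks)
    try:
        while open_count:
            for key, _ in sel.select():
                i = key.data
                chunk = key.fileobj.recv(4096)
                if not chunk:  # node closed its connection
                    sel.unregister(key.fileobj)
                    open_count -= 1
                    if buffers[i].strip():  # flush a final line lacking a newline
                        yield i, buffers[i].decode().strip()
                    continue
                buffers[i] += chunk
                # Keep any trailing partial line in the buffer for the next recv.
                *lines, buffers[i] = buffers[i].split(b"\n")
                for line in lines:
                    if line.strip():
                        yield i, line.decode().strip()
    finally:
        sel.close()
```

Connecting would take something like `socket.create_connection((host, port))` per node, where the hosts and port are whatever the nodes’ netcat listeners use. Calling `print(node, roll, flush=True)` on each yielded pair then gives the scrolling terminal output.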

So how super is this supercomputer? Not especially super. The individual simulations just happen to run at about “real time” speed, which is kinda neat. Total throughput is around 1.4 rolls per second, making the supercomputer roughly equivalent to a single Pentium III at 250MHz, for this task. I figure I would need at least 90 nodes in the cluster to match my modest Core 2 desktop. Mission accomplished.
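The arithmetic behind these figures, assuming throughput is split evenly across the four nodes and would scale linearly with more of them:

$$
\frac{1.4\ \text{rolls/s}}{4\ \text{nodes}} = 0.35\ \text{rolls/s per node}
\quad\Rightarrow\quad
\frac{1}{0.35\ \text{rolls/s}} \approx 2.9\ \text{s per roll},
\qquad
90 \times 0.35\ \text{rolls/s} \approx 31.5\ \text{rolls/s}
$$

So each node spends roughly three seconds per roll. Under the linear-scaling assumption, the 90-node estimate implies the desktop manages something on the order of 30 rolls per second.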

The large memory of the first node allows goodies like python and a web server to be included in its ramdisk, greasing the possibilities of some extra “master node” functionality. I already have a respectable python module for interfacing with the BetaBrite LED sign I happen to have in my strategic junk stockpile. With the LED sign the supercomputer can announce results itself, making it a completely independent system. With some basic CGI, a status web page could show detailed output and statistics and logs and all else, but I haven’t gone that far yet.
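For reference, here is a minimal sketch of the kind of frame such a module sends. It follows the commonly described layout of the Alpha sign communication protocol: sync NULs, SOH, type code, address, STX, the “write TEXT file” command, an ESC display-mode prefix, the text, and EOT. The specific codes and defaults below are assumptions to check against the protocol documentation, and `alpha_text_packet` is a hypothetical name, not the module mentioned above.

```python
NUL, SOH, STX, EOT, ESC = b"\x00", b"\x01", b"\x02", b"\x04", b"\x1b"

def alpha_text_packet(text: str, label: str = "A", position: str = " ", mode: str = "b",
                      type_code: str = "Z", address: str = "00") -> bytes:
    """Build an Alpha-protocol 'write TEXT file' packet (assumed layout).

    Assumed defaults: type code "Z" (all signs), address "00" (broadcast),
    command "A" (write TEXT file), display position " " (middle line),
    mode code "b" (hold).
    """
    return (NUL * 5                       # sync bytes so the sign can lock on
            + SOH + type_code.encode("ascii") + address.encode("ascii")
            + STX + b"A" + label.encode("ascii")
            + ESC + position.encode("ascii") + mode.encode("ascii")
            + text.encode("ascii")
            + EOT)
```

The returned bytes would then be written to whatever serial port the sign is attached to.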

The feng shui of the operation is excellent: four machines each rolling four dice and celebrating instances of the particular roll 4-4-4-4. Beyond being aesthetically attractive, the arrangement and operation of the computer are arguably lucky in themselves, so there is a risk that they contaminate readings of general luck when the supercomputer’s output is analyzed. However, because any expected bias would be positive, I have no plans to address this.
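For a sense of how often there’s something to celebrate, assuming fair, independent dice and the roughly 1.4 rolls per second of total throughput:

$$
P(\text{4-4-4-4}) = \left(\tfrac{1}{6}\right)^4 = \tfrac{1}{1296},
\qquad
\text{mean wait} \approx \frac{1296}{1.4\ \text{rolls/s}} \approx 926\ \text{s} \approx 15\ \text{min}
$$

That works out to about one 4-4-4-4 every quarter hour across the cluster, or roughly one an hour per node at 0.35 rolls per second.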

A possible aesthetic improvement would be to develop a simulation that uses the four CPUs together to simulate a single set of four dice, with each node consistently devoted to processing the same die. No matter how cleverly designed, this type of operation would have abysmal performance compared to the one-sim-per-CPU setup, which barely qualifies as productive in the first place. But it would be nifty, and that’s all that matters, really.